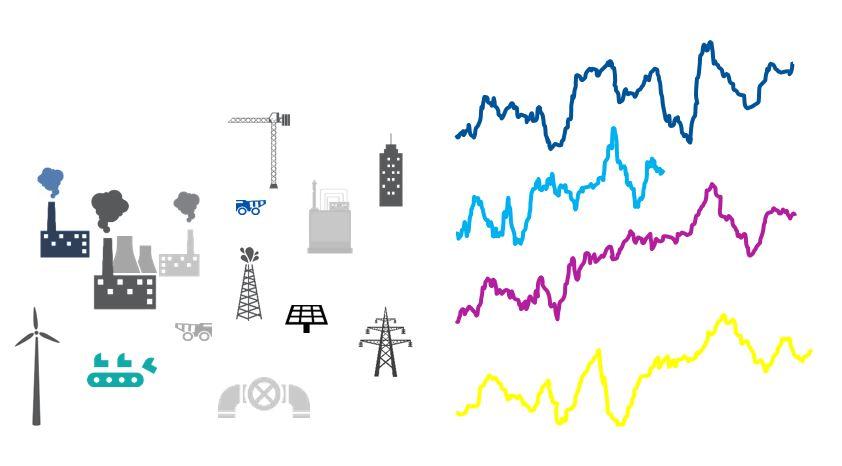

Is MacGyvering the right approach for your cloud data warehouse?

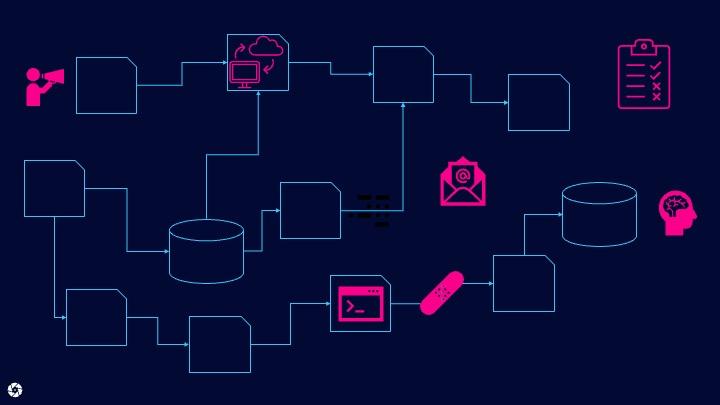

Developing and operating a modern cloud data warehouse requires the right tools for the many complex tasks involved. Cutting corners with a patchwork of disparate tools puts your investment at risk.